Does Your Software Count as High-Risk AI? A Practical EU AI Act Guide

Your engineering team just shipped a new AI feature. It screens job applicants, ranks them by predicted fit, and surfaces a shortlist for the hiring manager. You didn't think of it as "regulated" — it's just a scoring model. But under the EU AI Act, which enters full enforcement on August 2, 2026, that feature is almost certainly a high-risk AI system — subject to mandatory risk assessments, technical documentation, human oversight mechanisms, and EU database registration before it touches a single CV.

If you haven't classified your AI systems yet, you are behind. The deadline is not theoretical.

Key takeaways:

- The EU AI Act entered into force on August 1, 2024, and most of its provisions apply from August 2, 2026.

- Penalties are structured in tiers: up to €35 million or 7% of global annual turnover for prohibited practices, up to €15 million or 3% for high-risk non-compliance, and up to €7.5 million or 1% for supplying incorrect information, whichever is higher in each case (for SMEs and start-ups, whichever is lower).

- Classification as high-risk is determined by what your system does and where it does it — not by how you've labelled it internally.
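For most companies, each penalty ceiling is the higher of a fixed amount and a share of worldwide annual turnover; under Article 99, SMEs and start-ups instead face the lower of the two. The minimal sketch below computes those statutory maximums so the tiers can be compared for a given company size. The tier keys and function name are hypothetical, and the result is an upper bound, not a prediction of what a regulator would actually impose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PenaltyTier:
    fixed_cap_eur: int  # fixed ceiling in euros
    turnover_pct: int   # ceiling as a percentage of worldwide annual turnover


# Tier figures as listed in the key takeaways; the keys are hypothetical labels.
TIERS = {
    "prohibited_practice": PenaltyTier(35_000_000, 7),
    "high_risk_noncompliance": PenaltyTier(15_000_000, 3),
    "incorrect_information": PenaltyTier(7_500_000, 1),
}


def max_fine_eur(tier: str, annual_turnover_eur: int, is_sme: bool = False) -> float:
    """Return the statutory ceiling for one infringement tier (an upper bound only)."""
    t = TIERS[tier]
    pct_cap = annual_turnover_eur * t.turnover_pct / 100
    # Most undertakings: whichever amount is higher. SMEs and start-ups: whichever is lower.
    return float(min(t.fixed_cap_eur, pct_cap) if is_sme else max(t.fixed_cap_eur, pct_cap))


if __name__ == "__main__":
    # EUR 1bn turnover: 7% (EUR 70m) exceeds the EUR 35m fixed cap.
    print(max_fine_eur("prohibited_practice", 1_000_000_000))
    # SME with EUR 10m turnover: 3% (EUR 300k) is below EUR 15m, so the lower figure applies.
    print(max_fine_eur("high_risk_noncompliance", 10_000_000, is_sme=True))
```

The SME rule is why the same infringement can carry very different ceilings for a startup and a multinational, even though the obligations themselves are identical.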

What "High-Risk AI" Actually Means

Most explanations of the EU AI Act list its four risk tiers and leave it there. That isn't useful when your compliance team is staring at a product roadmap trying to figure out which items require legal review. Here is what the framework actually means in practice.

The EU AI Act takes a tiered approach to regulating AI based on the level of risk it poses, ensuring stricter controls for potentially dangerous AI while allowing innovation to flourish in lower-risk areas. The four tiers are: unacceptable risk (prohibited outright), high risk (heavily regulated), limited risk (transparency obligations only), and minimal risk (essentially unrestricted). The critical question for most software teams is not whether they're building prohibited AI — that's usually obvious — but whether they're building high-risk AI without knowing it.

High-risk status is not self-declared. Under Article 6, an AI system is high-risk if it meets either of two criteria: it is a safety component of a product, or is itself a product, covered by the EU harmonisation legislation listed in Annex I and required to undergo third-party conformity assessment; or its intended use is listed in Annex III. Annex III is the list that will catch most commercial software teams off guard, because it defines high-risk not by technical sophistication but by deployment context and decision-making impact.

Understanding the full EU AI Act compliance requirements gives essential background on how the risk tiers interact, and is worth doing before you work through the classification logic below.

Step 1: Is Your System an "AI System" at All?

Before asking whether your software is high-risk, you need to confirm it qualifies as an AI system under the Act's definition. This matters because traditional rule-based software — decision trees with hard-coded logic, simple IF/THEN rule engines — may fall outside scope entirely.

The Act defines an AI system as a machine-based system designed to operate with varying levels of autonomy, that may exhibit adaptiveness after deployment, and that, for explicit or implicit objectives, infers from the input it receives how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments. The key words are "infers" and "varying levels of autonomy". A system that applies fixed programmatic rules set by a human does not infer; it executes. A system that uses machine learning, neural networks, or statistical inference to generate outputs does.

In practice: a fraud detection engine that uses logistic regression learned from transaction data is likely to qualify as an AI system. A rules engine that flags transactions over €10,000 from new accounts probably does not. Most modern AI features, such as ML-based scoring, LLM-powered recommendations and computer vision classifiers, will qualify.
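The difference between executing rules and inferring from data is easiest to see side by side. The sketch below contrasts a hard-coded rule, like the €10,000 new-account flag, with a tiny logistic regression whose weights are learned from labelled examples. The feature scaling, training data and function names are illustrative assumptions; whether a particular system meets the legal definition remains a legal judgement, not something the code decides.

```python
import math


def rule_flag(amount_eur: float, account_age_days: int) -> bool:
    """Human-authored rule: the logic is fixed in advance, nothing is inferred."""
    return amount_eur > 10_000 and account_age_days < 30


def _sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(xs, ys, lr=0.1, epochs=2000):
    """Fit weights by stochastic gradient descent: the decision boundary comes from data."""
    w = [0.0] * len(xs[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(xs, ys):
            err = _sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
            b -= lr * err
    return w, b


def learned_score(w, b, x) -> float:
    """Probability-like fraud score produced by the learned model."""
    return _sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


# Hypothetical features: [amount in units of EUR 10,000, 1 if the account is new else 0]
X = [[0.2, 0], [0.5, 0], [0.3, 1], [1.5, 1], [2.0, 1], [1.8, 0]]
Y = [0, 0, 0, 1, 1, 1]
weights, bias = train_logistic(X, Y)
```

Nobody wrote the threshold the learned model uses; it was inferred from the examples, which is the property the definition turns on.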

Step 2: Does It Fall Under Prohibited Practices?

Before getting to high-risk classification, check whether your system might be prohibited under Article 5. Prohibited AI cannot be made compliant through documentation or oversight — it must be removed from the market.

Prohibited categories include social scoring that evaluates individuals on their behaviour or personal characteristics and leads to unjustified or detrimental treatment, real-time remote biometric identification in publicly accessible spaces for law enforcement purposes (with narrow exceptions), AI systems that use manipulative techniques or exploit vulnerabilities such as age or disability to distort behaviour in harmful ways, and emotion recognition in workplaces and educational institutions.

The emotion recognition ban deserves particular attention because it reaches ordinary HR and edtech products; the only exceptions are for medical or safety reasons. If your product roadmap includes any of these features targeting EU users, the conversation is about product architecture, not compliance controls.
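Because prohibited practices have to be designed out rather than documented, it can help to screen the system inventory for red-flag features early, so a possible Article 5 issue reaches legal review as an architecture question. The sketch below assumes each system carries hypothetical feature and setting tags; it encodes the medical-or-safety exception for emotion recognition and is a triage aid, not a substitute for reading Article 5.

```python
from dataclasses import dataclass, field
from typing import List, Set

# Hypothetical inventory tags mapped to the Article 5 categories discussed above.
PROHIBITED_TAGS = {
    "social_scoring": "Social scoring leading to detrimental or unjustified treatment",
    "realtime_public_biometric_id_for_police": "Real-time remote biometric identification for law enforcement",
    "manipulative_techniques": "Manipulation or exploitation of vulnerabilities",
    "emotion_recognition": "Emotion recognition in workplace or education",
}
BANNED_EMOTION_SETTINGS = {"workplace", "education"}


@dataclass
class SystemProfile:
    name: str
    feature_tags: Set[str] = field(default_factory=set)
    settings: Set[str] = field(default_factory=set)
    medical_or_safety_purpose: bool = False


def prohibited_hits(p: SystemProfile) -> List[str]:
    """Return the Article 5 categories a system appears to match; empty means no red flags."""
    hits = []
    for tag in sorted(p.feature_tags & set(PROHIBITED_TAGS)):
        if tag == "emotion_recognition":
            outside_banned_settings = not (p.settings & BANNED_EMOTION_SETTINGS)
            if outside_banned_settings or p.medical_or_safety_purpose:
                continue  # not banned here, though it may still be high-risk under Annex III
        hits.append(PROHIBITED_TAGS[tag])
    return hits
```

Emotion recognition outside workplaces and education is not banned, but it appears among the biometric use cases in Annex III, which is why the comment points there rather than treating the system as unregulated.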

Step 3: Does It Match an Annex III Use Case?

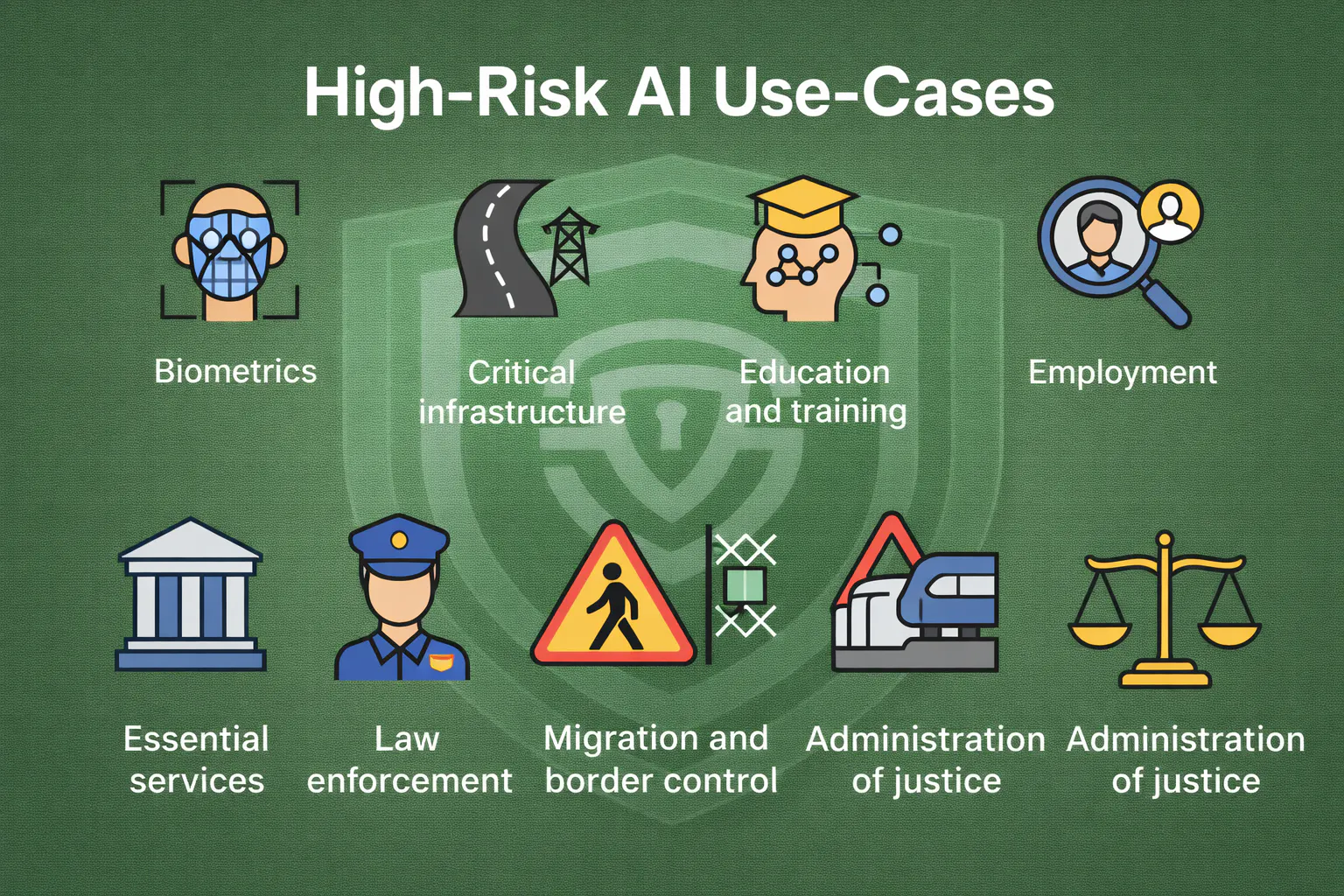

This is the classification decision that will affect the most companies. Annex III lists eight areas in which AI systems are presumed to be high-risk because of their potential impact on the health, safety or fundamental rights of natural persons. These are: biometric identification and categorisation; critical infrastructure management; education and vocational training; employment and worker management; access to essential services; law enforcement; migration and border control; and administration of justice and democratic processes.

The classification logic is use-case-first, not technology-first. A CV parsing model sounds benign. When it ranks candidates and that ranking influences hiring decisions for EU-based employees, it is Annex III high-risk. The classification question is: what decision does this system inform or make, and in which domain?
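The use-case-first logic can be captured as a lookup keyed on the decision a system informs rather than on its model type. In the minimal sketch below, the decision labels and the mapping are hypothetical examples for a product inventory, not terms defined in the Act; the eight areas follow Annex III's numbering.

```python
from enum import Enum
from typing import Optional


class AnnexIIIArea(Enum):
    BIOMETRICS = 1
    CRITICAL_INFRASTRUCTURE = 2
    EDUCATION = 3
    EMPLOYMENT = 4
    ESSENTIAL_SERVICES = 5
    LAW_ENFORCEMENT = 6
    MIGRATION_BORDER = 7
    JUSTICE_DEMOCRACY = 8


# Hypothetical decision labels; the model architecture is deliberately not an input.
DECISION_TO_AREA = {
    "candidate_ranking": AnnexIIIArea.EMPLOYMENT,
    "worker_performance_evaluation": AnnexIIIArea.EMPLOYMENT,
    "exam_scoring": AnnexIIIArea.EDUCATION,
    "creditworthiness": AnnexIIIArea.ESSENTIAL_SERVICES,
    "public_benefits_eligibility": AnnexIIIArea.ESSENTIAL_SERVICES,
    "utility_safety_component": AnnexIIIArea.CRITICAL_INFRASTRUCTURE,
}


def annex_iii_area(decision_informed: str) -> Optional[AnnexIIIArea]:
    """Return the Annex III area for a decision, or None if no mapped area applies."""
    return DECISION_TO_AREA.get(decision_informed)


# The same CV-parsing model, two deployments, two different answers.
print(annex_iii_area("payroll_field_extraction"))  # None
print(annex_iii_area("candidate_ranking"))         # AnnexIIIArea.EMPLOYMENT
```

A `None` result only means the label is not in this mapping. Unmapped decisions are safer routed to manual review than treated as out of scope.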

Annex III systems that profile individuals are always considered high-risk. Profiling here means automated processing of personal data to evaluate aspects of a person's life, such as work performance, economic situation, health, preferences, interests, reliability, behaviour, location or movement. The profiling rule does not add new domains to Annex III; it closes the escape hatch described next. A credit-scoring model, a life or health insurance pricing model, or a candidate-ranking tool that profiles individuals cannot claim that exemption however limited its role, and this catches many analytics products whose providers have not thought of themselves as being in regulated territory.

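Article 6(3) provides one important escape hatch. By derogation from Article 6(2), an Annex III system is not considered high-risk where it does not pose a significant risk of harm to the health, safety or fundamental rights of natural persons, including by not materially influencing the outcome of decision making. The derogation applies where the system performs a narrow procedural task, improves the result of a previously completed human activity, detects decision-making patterns or deviations without replacing or influencing the human assessment absent proper human review, or performs a preparatory task to an assessment. A provider who wants to claim it must document the assessment before market entry and register the system, leaving a paper trail regulators can audit.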
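Because profiling overrides the derogation, the order of checks matters. The sketch below encodes that order for a system already mapped to an Annex III area: profiling first, then the four Article 6(3) conditions, with a derogation outcome that still requires a documented assessment and registration. The field names are hypothetical, and each boolean stands in for a judgement that needs legal review.

```python
from dataclasses import dataclass


@dataclass
class AnnexIIIAssessment:
    in_annex_iii: bool
    performs_profiling: bool
    # Article 6(3) conditions; any one of them can support the derogation.
    narrow_procedural_task: bool = False
    improves_completed_human_activity: bool = False
    detects_patterns_without_replacing_review: bool = False
    preparatory_task_only: bool = False


def annex_iii_outcome(a: AnnexIIIAssessment) -> str:
    if not a.in_annex_iii:
        return "not_annex_iii"
    if a.performs_profiling:
        return "high_risk"  # profiling systems cannot use the derogation
    if any([
        a.narrow_procedural_task,
        a.improves_completed_human_activity,
        a.detects_patterns_without_replacing_review,
        a.preparatory_task_only,
    ]):
        # Not high-risk, but the assessment must be documented and the system registered.
        return "derogation_document_and_register"
    return "high_risk"


cv_ranker = AnnexIIIAssessment(in_annex_iii=True, performs_profiling=True, preparatory_task_only=True)
print(annex_iii_outcome(cv_ranker))  # high_risk
```

Note the CV ranker: even if it only prepares a shortlist, ranking candidates profiles them, so the derogation is unavailable.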

Step 4: Is It a Safety Component in a Regulated Product?

The second pathway to high-risk classification catches AI embedded in physical products. An AI system is high-risk if it is a safety component of a product, or is itself a product, that falls under the EU sector rules listed in Annex I (such as the Machinery Directive or the Medical Devices Regulation) and must therefore undergo third-party conformity assessment. This affects AI in medical devices, autonomous vehicles, aviation systems, industrial machinery, and railway infrastructure. If you are building AI that goes inside hardware governed by CE marking requirements, you are almost certainly building high-risk AI. The timeline differs, though: obligations for this Annex I pathway apply from August 2, 2027, a year after the Annex III deadline.
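Steps 1 through 4 can be chained into a single triage function for an AI inventory. The sketch below assumes each step has already been answered (the boolean fields are hypothetical) and returns the resulting category with the date from which the main obligations apply under Article 113: February 2, 2025 for the Article 5 prohibitions, August 2, 2026 for Annex III systems, and August 2, 2027 for the Annex I pathway. It checks Annex I before Annex III for simplicity, although a system can fall under both.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional, Tuple


@dataclass
class SystemFacts:
    infers_outputs: bool                       # Step 1: meets the AI system definition
    prohibited_practice: bool                  # Step 2: matches an Article 5 category
    annex_iii_high_risk: bool                  # Step 3: after profiling and derogation checks
    annex_i_safety_component_or_product: bool  # Step 4: covered by Annex I legislation
    needs_third_party_assessment: bool         # Step 4: third-party conformity assessment required


def triage(f: SystemFacts) -> Tuple[str, Optional[date]]:
    if not f.infers_outputs:
        return ("outside_ai_act_definition", None)
    if f.prohibited_practice:
        return ("prohibited", date(2025, 2, 2))
    if f.annex_i_safety_component_or_product and f.needs_third_party_assessment:
        return ("high_risk_annex_i", date(2027, 8, 2))
    if f.annex_iii_high_risk:
        return ("high_risk_annex_iii", date(2026, 8, 2))
    # Not high-risk; transparency duties may still apply (for example, to chatbots).
    return ("not_high_risk", None)
```

Keeping the prohibition check ahead of everything else mirrors the article's ordering: a prohibited system never reaches the question of which high-risk pathway applies.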

Real Examples: Where Most Software Teams Land

AI hiring or recruitment tool. This is the clearest high-risk classification in commercial software. CV screening, candidate ranking, interview scoring, and even tools that merely surface candidates for human review all fall under Annex III's employment category. If your business uses AI to screen, rank, or match candidates, the EU now regulates those tools as high-risk systems. There is no size or revenue threshold — a ten-person startup using an AI-powered ATS to screen EU applicants is in scope.

Credit scoring or insurance pricing model. Access to essential services is in scope under Annex III, including eligibility for public assistance benefits, creditworthiness assessment and credit scoring, risk assessment and pricing for life and health insurance, and emergency healthcare triage. A SaaS fintech that offers an AI-powered creditworthiness API to European lenders is a provider of a high-risk AI system, even if the lender is the one making the final credit decision.

General-purpose chatbot or LLM assistant. A customer support chatbot, a writing assistant, or an internal knowledge tool is generally not high-risk unless it is deployed in a high-risk context, such as providing medical triage, guiding decisions in law enforcement settings, or making eligibility determinations for public services. The system's deployment context matters as much as its technical architecture. Outside those contexts, AI systems that interact with humans (chatbots, virtual assistants) carry a transparency requirement under Article 50: users must be told they are interacting with an AI unless that is obvious from the context. That is far less onerous than the full Annex III obligation stack.
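For chatbots outside high-risk contexts, the practical engineering task is the disclosure itself. Article 50 requires that people be informed they are interacting with an AI system unless that is obvious from the context, but it does not prescribe a mechanism. The sketch below shows one assumed design: a session wrapper that prepends a notice to the first reply from any text-generation backend. The class name and notice wording are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List

AI_NOTICE = "You are chatting with an AI assistant, not a human."


@dataclass
class DisclosingChatSession:
    generate: Callable[[str], str]  # any backend that maps a user message to a reply
    disclosed: bool = False
    replies: List[str] = field(default_factory=list)

    def reply(self, user_message: str) -> str:
        answer = self.generate(user_message)
        if not self.disclosed:
            # Disclose once, at the start of the interaction.
            answer = f"{AI_NOTICE}\n\n{answer}"
            self.disclosed = True
        self.replies.append(answer)
        return answer


session = DisclosingChatSession(generate=lambda msg: f"Echo: {msg}")
print(session.reply("Where is my order?"))
print(session.reply("Thanks"))
```

Disclosing once per session is a design choice; a persistent label in the chat interface is another option that does not depend on the user reading the first message.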

SaaS analytics platform. A business intelligence platform that surfaces trends and correlations for human analysts to act on is typically not high-risk, provided it doesn't profile individuals in Annex III domains. Where analytics platforms get pulled in is when they segment, score, or rank individuals in ways that feed employment, credit, or benefits decisions — even indirectly.

Fraud detection system. This sits in a genuine grey zone. AI systems used to detect financial fraud are specifically excluded from the creditworthiness use case in Annex III's "access to essential services" category. However, if the same model is used not just for fraud detection but to assess creditworthiness or determine account access, the scope expands. The use case determines the classification, and multi-purpose models may carry multi-tier obligations.
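One way to handle multi-purpose models is to classify each use separately and let the strictest tier set the bar for the shared model's controls. The sketch below does that with a hypothetical per-use table; the tier assigned to fraud detection is an illustrative assumption reflecting its carve-out from the creditworthiness use case, not a legal conclusion.

```python
from enum import IntEnum
from typing import Iterable, List, Tuple


class Tier(IntEnum):
    MINIMAL = 0
    LIMITED = 1     # transparency obligations
    HIGH = 2        # Annex III / Annex I obligations
    PROHIBITED = 3


# Hypothetical per-use classifications for one shared scoring model.
USE_TIERS = {
    "payment_fraud_detection": Tier.MINIMAL,   # assumed; carved out of the creditworthiness use case
    "creditworthiness_assessment": Tier.HIGH,  # Annex III, access to essential services
    "customer_support_chat": Tier.LIMITED,
}


def strictest_tier(uses: Iterable[str]) -> Tuple[Tier, List[str]]:
    """Return the highest tier across a model's uses and the uses that trigger it."""
    tiers = {use: USE_TIERS[use] for use in uses}
    top = max(tiers.values())
    return top, sorted(use for use, tier in tiers.items() if tier == top)
```

Adding the creditworthiness use to a fraud model changes the answer for the whole model, which is the scope expansion the fraud example describes.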

For a deeper look at the technical obligations that flow from these classifications, the EU AI Act for CTOs guide translates the compliance requirements into engineering terms.

The Grey Areas: GPAI, APIs, and Dual-Use Systems

General-purpose AI models, foundation models such as large language models, have their own compliance track under the Act. They are not classified as high-risk by default, but when a GPAI model is integrated into a high-risk AI system, both sets of obligations apply across the value chain. The model provider carries the GPAI obligations, including supplying documentation and information to downstream providers who integrate the model. The company that builds the high-risk system carries the full high-risk obligations for that system. A company using GPT-4 or Claude as the engine behind an AI recruitment tool is therefore the provider of a high-risk system, and it depends on the model provider's GPAI documentation to meet its own obligations.

APIs present a related classification challenge. The Act distinguishes between providers (who build AI systems and place them on the market) and deployers (who use them in specific contexts). If you offer an AI API that clients can deploy in any context, you are likely a provider, and your classification turns on the intended purpose you define and market; each deployer bears separate obligations for the context it creates. A client that repurposes a general system for an Annex III use can itself become the provider of a high-risk system under Article 25. Terms of service that restrict high-risk deployment contexts help define intended purpose, but they are not a complete legal shield: the Act allocates obligations along the whole value chain, not just to the end user.
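Article 25 sets out when a deployer or other third party takes on the provider role for a high-risk system: putting its own name or trademark on a high-risk system already on the market, substantially modifying such a system, or changing the intended purpose of a system not classified as high-risk so that it becomes high-risk. The sketch below is a simplified reading of those three triggers for reviewing client integrations of an API; the parameter names are hypothetical and edge cases need legal review.

```python
from dataclasses import dataclass


@dataclass
class IntegrationChanges:
    own_name_or_trademark: bool = False
    substantial_modification: bool = False
    repurposed_into_high_risk_use: bool = False


def becomes_provider(system_is_high_risk: bool, c: IntegrationChanges) -> bool:
    """Simplified reading of the three Article 25(1) triggers; not legal advice."""
    if system_is_high_risk:
        # Rebranding or substantially modifying a high-risk system already on the market.
        return c.own_name_or_trademark or c.substantial_modification
    # Changing the intended purpose of a non-high-risk system so that it becomes high-risk.
    return c.repurposed_into_high_risk_use
```

A generic text-classification API that a client wires into candidate screening falls under the third trigger: the client, not the API vendor, may become the provider of the resulting high-risk system.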

The overlap between AI regulation and data protection law creates an additional layer of exposure. GDPR penalties reach up to €20 million or 4% of global annual turnover, whichever is higher, and the AI Act adds its own enforcement regime on top. AI systems that process personal data to inform high-risk decisions can sit simultaneously under GDPR's Article 22 restrictions on solely automated decision-making and the AI Act's high-risk obligations, so a single product can trigger both regimes. The intersection of AI and GDPR compliance is also where many organisations' governance is least mature.

What High-Risk Classification Actually Requires

If your system is high-risk, classification is not the end of the journey; it is the beginning. From August 2, 2026, providers of high-risk AI systems in the Annex III categories must demonstrate compliance with the requirements and obligations set out in Articles 9 through 49, and deployers carry their own obligations under Article 26. In practice this means documented, ongoing risk management under Article 9; training data governance under Article 10; complete technical documentation under Article 11; automatic logging of system events under Article 12; transparency and instructions for use under Article 13; human oversight mechanisms under Article 14; accuracy, robustness, and cybersecurity controls under Article 15; a quality management system under Article 17; conformity assessment before market entry under Article 43; and EU database registration under Article 49.
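A simple way to make this obligation list operational is to track evidence per article and report what is missing. The sketch below assumes a hypothetical evidence store keyed by article reference (for example, links to a risk register or test reports), where an empty entry counts as a gap. It covers provider-side requirements only and is not an exhaustive list of obligations under the Act.

```python
from typing import Dict, List

# Provider-side requirements for high-risk systems, keyed by article of the AI Act.
HIGH_RISK_OBLIGATIONS = {
    "Art. 9": "Risk management system",
    "Art. 10": "Data and data governance",
    "Art. 11": "Technical documentation",
    "Art. 12": "Record-keeping (automatic logs)",
    "Art. 13": "Transparency and instructions for use",
    "Art. 14": "Human oversight",
    "Art. 15": "Accuracy, robustness and cybersecurity",
    "Art. 17": "Quality management system",
    "Art. 43": "Conformity assessment",
    "Art. 49": "Registration in the EU database",
}


def gap_report(evidence: Dict[str, List[str]]) -> List[str]:
    """Return 'Art. N: requirement' lines for every obligation with no recorded evidence."""
    # Dicts preserve insertion order, so gaps come out in article order.
    return [
        f"{ref}: {name}"
        for ref, name in HIGH_RISK_OBLIGATIONS.items()
        if not evidence.get(ref)
    ]


print(gap_report({"Art. 9": ["risk-register-v3"], "Art. 11": ["annex-iv-draft"]}))
```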

Each of these is an operational system, not a document. Risk management under Article 9 requires continuous monitoring throughout the system's lifecycle, not a one-time assessment. Data governance under Article 10 means ensuring training, validation and testing data are relevant, sufficiently representative and, to the best extent possible, free of errors; examining them for biases that could lead to discrimination and mitigating what you find; and being able to evidence all of this to a regulator. Human oversight under Article 14 means building interfaces and workflows that allow humans to meaningfully understand, monitor, and override AI decisions, not merely adding a rubber-stamp approval step.
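The difference between meaningful oversight and a rubber stamp shows up in how decisions are recorded. The sketch below assumes a hiring-style workflow in which the model only recommends, a named reviewer records the final decision, every decision is written as a JSON log line (in the spirit of Article 12's automatic logging), and the override rate is tracked. All names are hypothetical, and the in-memory list stands in for durable, append-only storage.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class OversightRecord:
    timestamp: str
    case_id: str
    model_version: str
    model_recommendation: str
    model_score: float
    reviewer_id: str
    final_decision: str
    overridden: bool
    rationale: Optional[str]


def record_decision(log: List[str], case_id: str, model_version: str,
                    recommendation: str, score: float, reviewer_id: str,
                    decision: str, rationale: Optional[str] = None) -> OversightRecord:
    """The model recommends, a named human decides, and both are logged together."""
    rec = OversightRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        case_id=case_id,
        model_version=model_version,
        model_recommendation=recommendation,
        model_score=score,
        reviewer_id=reviewer_id,
        final_decision=decision,
        overridden=decision != recommendation,
        rationale=rationale,
    )
    log.append(json.dumps(asdict(rec)))
    return rec


def override_rate(records: List[OversightRecord]) -> float:
    """Share of decisions where the reviewer departed from the model."""
    return sum(r.overridden for r in records) / len(records) if records else 0.0
```

A persistently near-zero override rate is a signal of possible automation bias worth investigating, not proof of it; the log makes that question answerable.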

The documentation burden is substantial. The EU AI Act's Article 11 requires comprehensive technical documentation covering system architecture, training data, testing methodologies, and performance metrics. For most teams, the gap is not conceptual — it is operational. Documentation must be produced before market entry and kept current throughout the system's deployment. The Annex III list of high-risk applications is not static — the European Commission has authority to update it based on technological developments — so the inventory process must be embedded as an ongoing operational practice rather than a sprint deliverable.
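Keeping documentation current is easier to enforce when staleness is checked automatically. The sketch below assumes an inventory that records, for each system, the deployed model version, the model version the technical documentation describes, and the last review date; it flags any mismatch and any review older than a hypothetical internal limit of 180 days. Article 11 requires documentation to be kept up to date but does not set a review interval, so the threshold is a policy assumption.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Tuple


@dataclass
class DocState:
    system: str
    deployed_model_version: str
    documented_model_version: str
    last_reviewed: date


def stale_docs(docs: List[DocState], today: date,
               max_age_days: int = 180) -> List[Tuple[str, List[str]]]:
    """Return (system, reasons) for documentation that may no longer be current."""
    flagged = []
    for d in docs:
        reasons = []
        if d.documented_model_version != d.deployed_model_version:
            reasons.append("deployed model differs from documented model")
        if (today - d.last_reviewed).days > max_age_days:
            reasons.append(f"not reviewed in over {max_age_days} days")
        if reasons:
            flagged.append((d.system, reasons))
    return flagged
```

Running a check like this in the release pipeline ties documentation updates to model deployments rather than to audit dates.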

Three Common Misclassifications

"All AI is high-risk." It is not. Spam filters, recommendation engines, productivity tools, and most AI features in consumer applications that do not touch the Annex III domains sit in the minimal-risk tier, with no mandatory requirements beyond general duties such as the Article 4 AI literacy obligation. Organisations that treat all AI as high-risk waste compliance resources and slow down low-risk product development unnecessarily.

"Only big tech is affected." The Act's scope is defined by what AI systems do and where they are deployed, not by company size. For small developers, identifying whether an application qualifies as high-risk is crucial, because that classification triggers the most extensive compliance obligations. SMEs face lower maximum fines (for them, each cap is the lower of the fixed amount and the turnover percentage) and may provide technical documentation in a simplified form, but the underlying classification rules and obligations are the same regardless of company size.

"Chatbots are always regulated as high-risk." A general-purpose chatbot carries transparency requirements — users must know it is AI — but not the full Annex III compliance stack unless it is deployed in a high-risk context. The same LLM configured as a medical symptom triage tool is high-risk. Context determines classification.

High-Risk AI Compliance Checklist

Risk Management System (Article 9): Documented risk management process covering the entire AI lifecycle. Identification and analysis of known and reasonably foreseeable risks. Risk evaluation procedures in place and evidenced. Residual risks assessed, judged acceptable, and backed by documented mitigation measures.

Data Governance (Article 10): Training, validation, and testing datasets documented with known limitations. Bias examination and mitigation steps recorded. Personal data handling aligned with GDPR requirements. Data provenance traceable for regulator review.

Technical Documentation (Article 11 + Annex IV): System architecture documented including all model components. Intended purpose, deployment context, and affected populations defined. Performance metrics and accuracy benchmarks recorded. Change management log maintained from initial deployment.

Transparency and Human Oversight (Articles 13–14): Clear disclosure to deployers of the system's capabilities and limitations. Human oversight interfaces designed and operational, not theoretical. Ability for authorised persons to intervene, override, or halt the system confirmed.

Monitoring and Post-Market Controls (Articles 12, 72 and 73): Automatic logging of system operation sufficient to enable post-hoc review. Serious incident reporting procedures established. Performance monitoring against declared accuracy and reliability benchmarks. Periodic review cycles defined.

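Conformity and Registration: Conformity assessment completed before market entry. Registration in the EU database for high-risk AI systems under Article 49. CE marking affixed under Article 48, which applies to Annex III systems as well as Annex I products (as a digital marking for systems provided digitally).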
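Performance monitoring against declared benchmarks lends itself to automation. The sketch below assumes labelled outcomes arrive in periodic windows and flags any window whose observed accuracy falls below the declared level minus a tolerance. The window labels and the 2-point default tolerance are hypothetical; the Act does not prescribe a tolerance, and a flag should feed the periodic review cycle rather than trigger automatic action.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class MonitoringWindow:
    label: str
    correct: int
    total: int


def accuracy_shortfalls(declared_accuracy: float, windows: List[MonitoringWindow],
                        tolerance: float = 0.02) -> List[Tuple[str, float]]:
    """Return (window, observed accuracy) where accuracy dips below declared minus tolerance."""
    shortfalls = []
    for w in windows:
        if w.total == 0:
            continue  # no labelled outcomes yet in this window
        observed = w.correct / w.total
        if observed < declared_accuracy - tolerance:
            shortfalls.append((w.label, round(observed, 3)))
    return shortfalls
```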

Manual Classification vs. Automated AI Governance

Most organisations begin AI classification manually — legal counsel reviews product descriptions against the Annex III categories, and engineering documents systems in spreadsheets. This approach is adequate for small portfolios and initial classification exercises, but it degrades rapidly as AI system counts grow.

Policy documents and spreadsheet trackers work briefly but break down badly as AI portfolios grow. Spreadsheets typically lack reliable version control and audit trails, and they are hard to query programmatically. When regulators request evidence of compliance with a specific requirement, assembling it from scattered files can take weeks.

The more fundamental problem is velocity. AI systems are retrained, updated, and redeployed continuously. A system that was classified as not-high-risk twelve months ago may have expanded into an Annex III domain through feature additions no one flagged to the compliance team. Governance needs to be embedded in the product lifecycle, not bolted on before an audit.
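Feature creep into Annex III territory can be caught by diffing a system's capabilities against those recorded at its last classification review. The sketch below assumes a hypothetical set of capability tags maintained in the product inventory and a hand-written mapping from tags to Annex III areas; any newly added tag that maps to an area sends the system back for reclassification.

```python
from typing import Dict, Set

# Hypothetical capability tags that signal a possible move into an Annex III area.
ANNEX_III_SIGNALS = {
    "ranks_job_candidates": "employment",
    "evaluates_worker_performance": "employment",
    "scores_creditworthiness": "essential services",
    "grades_students": "education",
    "identifies_people_biometrically": "biometrics",
}


def reclassification_triggers(tags_at_last_review: Set[str],
                              current_tags: Set[str]) -> Dict[str, str]:
    """Map each newly added capability tag to the Annex III area it may bring into scope."""
    added = current_tags - tags_at_last_review
    return {tag: ANNEX_III_SIGNALS[tag] for tag in sorted(added) if tag in ANNEX_III_SIGNALS}
```

A help-desk tool that quietly starts scoring agents' performance is the kind of drift this catches: the diff surfaces `evaluates_worker_performance` even though no one filed it as a compliance change.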

Automated AI governance platforms address this through continuous monitoring, automated impact assessments, and audit-trail generation that keeps pace with development cycles. The tradeoff is implementation cost and the risk of over-relying on tooling that still requires human judgment for genuinely ambiguous classifications. The two approaches are not mutually exclusive: most mature compliance programmes use governance tooling to manage scale while reserving manual legal review for novel or borderline cases. Understanding how AI governance framework tools translate regulatory requirements into operational controls is useful context before committing to a platform.
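Audit-trail generation is one place where tooling clearly beats spreadsheets. The sketch below shows one possible technique, not how any particular platform works: an append-only log of classification decisions in which each entry stores the SHA-256 hash of the previous one, so editing an earlier entry breaks verification. This makes the log tamper-evident rather than tamper-proof, and in practice it would also need durable storage. The class and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List


class ClassificationAuditLog:
    """Append-only log where each entry commits to the previous one via SHA-256."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    @staticmethod
    def _digest(entry: Dict[str, str]) -> str:
        # Hash every field except the stored hash itself, with stable key order.
        payload = json.dumps({k: v for k, v in entry.items() if k != "hash"}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, system: str, classification: str, reviewer: str, rationale: str) -> Dict[str, str]:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "system": system,
            "classification": classification,
            "reviewer": reviewer,
            "rationale": rationale,
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry makes this return False."""
        prev = self.GENESIS
        for e in self.entries:
            if e["prev_hash"] != prev or e["hash"] != self._digest(e):
                return False
            prev = e["hash"]
        return True
```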

FAQ

What is considered high-risk AI under the EU AI Act?

A system is high-risk if it falls into one of two categories: it is embedded in or constitutes a regulated product requiring third-party conformity assessment under EU harmonisation legislation (Annex I), or its intended use matches one of the eight sensitive application domains listed in Annex III, which include biometric identification, employment decisions, credit assessment, critical infrastructure, educational scoring, and law enforcement. The Annex III classification is presumptive: your system is high-risk unless you can document that it does not materially influence decision-making affecting people, and a system that profiles individuals cannot claim that exception at all.

Are chatbots high-risk under the EU AI Act?

Generally no — a customer service chatbot or LLM assistant carries transparency requirements (disclosure that it is AI) but not the full high-risk obligation stack. The exception is when the chatbot is deployed in a high-risk context: a chatbot that determines eligibility for public benefits, provides medical triage guidance, or makes binding decisions in a law enforcement or migration setting would be high-risk. Deployment context, not technology type, determines classification.

Does SaaS count as high-risk AI?

SaaS products can be high-risk AI systems if their functionality matches Annex III use cases. A SaaS HR platform with AI-powered CV ranking is high-risk. A SaaS writing assistant is not. The Act applies to any organisation placing AI systems on the EU market, regardless of whether the product is cloud-based — SaaS architecture provides no exemption from scope.

What is Annex III?

Annex III is the list of eight high-risk use case categories built into the EU AI Act. Any AI system whose intended use falls into one of these categories is presumptively high-risk, subject to a narrow derogation for systems that demonstrably do not materially influence decision-making and do not profile individuals. The Commission has authority to update Annex III over time, meaning classification is not a one-time exercise.

How do I know if I need to comply by August 2026?

If you place AI systems on the EU market, regardless of where your company is headquartered, and those systems fall under Annex III categories, the August 2, 2026 deadline applies. The regulation's extra-territorial reach mirrors the GDPR: providers and deployers outside the EU are in scope when their AI systems are placed on the EU market or when the output those systems produce is used in the EU. Start with an AI system inventory, classify each system against Annex III, and prioritise compliance build-out for any system that hits a high-risk category.

The August 2026 deadline for high-risk AI system compliance is now five months away. For most organisations, the gap between current state and compliant state is not a documentation problem — it is an operational one.

The classification exercise above tells you where you stand. The 90-day EU AI Act compliance playbook translates that classification into an actionable compliance programme, covering AI inventory, impact assessments, technical controls, and the governance infrastructure needed to produce auditable evidence when regulators ask for it.
