FRIA Guide: Conducting Fundamental Rights Impact Assessments under the EU AI Act

Your organisation has been using an AI-powered tool to screen job applicants for the past 18 months. The system ingests CVs, scores candidates on a composite of attributes, and surfaces a ranked shortlist to hiring managers. Until recently, using it was a product decision. From August 2, 2026, it may also carry a legal obligation: under Article 27 of the EU AI Act, certain deployers of high-risk AI systems, including public bodies and private entities providing public services that use tools like this one, must conduct a Fundamental Rights Impact Assessment before putting the system into use, and they must notify the competent national market surveillance authority of the results.

If that assessment has not been completed, the deployer is exposed to fines of up to €15 million or 3% of global annual turnover. That is not a theoretical risk. The deadline will either be met or missed.

TL;DR

- The FRIA (Fundamental Rights Impact Assessment) is a mandatory pre-deployment assessment under EU AI Act Article 27, required for specific categories of deployers of high-risk AI systems listed in Annex III.

- It is broader than a GDPR DPIA: it covers all fundamental rights under the EU Charter, not just data protection — including non-discrimination, human dignity, access to justice, and freedom of expression.

- The assessment must be completed before first deployment, results must be notified to the national market surveillance authority, and it must be updated whenever material circumstances change.

What Is a FRIA?

A Fundamental Rights Impact Assessment is a structured evaluation that deployers of certain high-risk AI systems must conduct to identify, assess, and mitigate the potential impacts of those systems on the fundamental rights of individuals and groups likely to be affected. The obligation is established by Article 27 of Regulation (EU) 2024/1689 — the EU AI Act — and it targets the deployer rather than the developer.

That distinction matters. The AI Act separates providers (the organisations that build and place AI systems on the market) from deployers (the organisations that put those systems to use under their own authority). A bank that licenses a third-party credit scoring model is a deployer. A hospital system implementing an AI triage tool from a health tech vendor is a deployer. A public employment service using an AI matching system is a deployer. The provider may have done all the technical conformity work required of them under Articles 9 through 15. That work does not substitute for the deployer's FRIA. The deployer is closer to the actual impact point: they know the specific context of use, the populations affected, and the operational decisions the AI system will inform. That is precisely why the Act places this obligation on them.

The scope of the FRIA extends well beyond what a GDPR Data Protection Impact Assessment covers. A DPIA under GDPR Article 35 examines risks to individuals' rights and freedoms arising from the processing of their personal data. A FRIA examines potential impacts on the full range of fundamental rights enshrined in the EU Charter of Fundamental Rights — including the right to non-discrimination, human dignity, freedom of expression, the right to an effective remedy and fair trial, and workers' rights — for all individuals affected by the system, not only those whose data is processed. A surveillance system that affects the freedom of movement of people who never interact with it directly, or a social benefits eligibility algorithm that affects individuals who have no direct relationship with the AI system, both fall within the FRIA's scope in ways they would not under a DPIA alone.

Who Must Conduct a FRIA?

The FRIA obligation is not universal across all deployers of high-risk AI. Article 27 identifies two categories of deployers who are specifically required to conduct one.

The first and broader category is deployers that are bodies governed by public law, or private entities providing public services. This encompasses all public authorities — government agencies, municipal bodies, regulatory entities, public healthcare providers, public universities — deploying high-risk AI systems across most of the Annex III categories. It also covers private organisations delivering services of a public character: private companies providing social housing allocation, private healthcare providers, private educational institutions. The rationale is that when an AI system informs or makes decisions affecting access to public services, legal entitlements, or civic participation, the potential for systematic rights violations is structurally higher and the accountability obligation correspondingly greater.

The second category applies regardless of public or private status: deployers of AI systems for creditworthiness evaluation, credit scoring, or risk assessment and pricing for life and health insurance — the Annex III point 5(b) and (c) categories. A fintech company scoring loan applicants, an insurance platform pricing life policies through an AI underwriting model, or a bank using algorithmic credit assessment all fall here.

One category of Annex III high-risk systems is explicitly exempt from the FRIA obligation: AI systems used as safety components in the management of critical digital infrastructure, road traffic, and utilities supply. This is a narrow carve-out. The AI system managing smart traffic flow through a city's road network does not trigger a FRIA. The AI system assessing job candidates for positions at that city's transport authority does.
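The scoping rules above can be sketched as a small decision function. This is a minimal sketch of the reading given in this guide, not an official test: the `Deployment` class, its field names, and the area labels are hypothetical names chosen for illustration, and real cases (for example, what counts as "providing public services") need legal judgment that a boolean cannot capture.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical short labels for Annex III areas, chosen for this sketch only.
CRITICAL_INFRASTRUCTURE = "critical_infrastructure"  # exempt safety components
CREDIT_SCORING = "creditworthiness"                  # Annex III point 5(b)
LIFE_HEALTH_INSURANCE = "life_health_insurance"      # Annex III point 5(c)


@dataclass(frozen=True)
class Deployment:
    """Illustrative description of one deployed system (not an official schema)."""
    annex_iii_area: Optional[str]    # None: the system is not Annex III high-risk
    public_law_body: bool            # deployer is a body governed by public law
    provides_public_services: bool   # private entity providing public services


def fria_required(d: Deployment) -> bool:
    """Simplified reading of the Article 27 scoping rules described above."""
    if d.annex_iii_area is None:
        return False  # not a high-risk system under Annex III
    if d.annex_iii_area == CRITICAL_INFRASTRUCTURE:
        return False  # the explicit carve-out
    if d.annex_iii_area in (CREDIT_SCORING, LIFE_HEALTH_INSURANCE):
        return True   # second category: applies to public and private deployers
    # First category: depends on who the deployer is.
    return d.public_law_body or d.provides_public_services


# The two examples from the paragraph above, plus a private lender.
traffic = Deployment(CRITICAL_INFRASTRUCTURE, True, False)
transport_hiring = Deployment("employment", True, False)
fintech = Deployment(CREDIT_SCORING, False, False)

print(fria_required(traffic))           # False
print(fria_required(transport_hiring))  # True
print(fria_required(fintech))           # True
```

Running a function like this across an AI system inventory gives a first-pass list of systems to review; it does not replace the legal analysis for borderline deployers.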

Understanding the full EU AI Act risk classification framework — and where your AI system sits within it — is the prerequisite for determining FRIA applicability. Organisations that have not yet inventoried their AI systems by risk category are almost certainly operating without the visibility they need to assess their Article 27 exposure.

The Five-Step FRIA Process

Step 1: Map the AI System and Its Deployment Context

The FRIA begins with a precise description of the system as deployed, not as it functions technically in isolation. Article 27(1)(a) requires the deployer to describe the processes in which the high-risk AI system will be used in line with its intended purpose, and Article 27(1)(b) requires a description of the period of time over which, and the frequency with which, it will be used. This is more demanding than it appears. The same AI system may behave differently, and carry different rights implications, depending on whether it is used by a large public authority processing millions of applicants or a small private employer processing fifty. The deployment context must be captured in full: scale, decision type, affected populations, frequency of use, and the degree to which the system's output is determinative rather than advisory.

The system description should identify what data the AI system takes as input, what it produces as output, and how that output enters decision-making processes. If a hiring AI produces a ranked list and hiring managers routinely adopt the top recommendation without review, the system is effectively making the hiring decision. If the ranked list is one input among many reviewed carefully by trained staff, the human oversight dynamic is different. Both scenarios may be Annex III high-risk, but the rights analysis and required mitigations diverge significantly.

Step 2: Identify Affected Groups and Map Rights Exposure

The FRIA must identify the categories of persons and groups likely to be affected by the deployment, and the specific risks of harm likely to impact them. This requires thinking beyond the direct users or subjects of the AI system to consider indirect effects. A loan denial algorithm affects not only the applicant but potentially their household and dependants. A content moderation system affects not only the account holder but the broader audience that would have received the content. An employment matching system affects workers who are never surfaced to employers, not just those who receive offers.

For each identified group, the FRIA maps which fundamental rights are at potential risk. The EU Charter covers rights across six title areas — dignity, freedoms, equality, solidarity, citizens' rights, and justice — and the assessment should systematically work through those most relevant to the system in question. For employment AI, non-discrimination under Article 21 is primary: does the system produce outputs that systematically disadvantage individuals on the basis of gender, age, ethnicity, or disability? For credit scoring AI, the right to effective remedy under Article 47 is directly implicated: can an individual understand why they were scored as they were, and challenge that outcome? For public benefit eligibility systems, the right to good administration under Article 41 requires that decisions be made impartially, within a reasonable time, and with reasons given.

Step 3: Risk Analysis and Mitigation Planning

Once the rights exposure map is established, the FRIA must assess the severity and likelihood of each identified risk, and specify the technical and organisational measures to be taken if a risk materialises. Severity should be assessed along two dimensions: how significant the harm is to the affected individual or group, and how many individuals are affected. Likelihood should account for system performance on protected group characteristics, the availability of redress mechanisms, and the degree of human oversight in the decision process.
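One way to make the severity and likelihood assessment concrete is a simple risk register. The sketch below is illustrative only: the `RightsRisk` class, the 1 to 5 scales, and the scoring rule (worst severity dimension multiplied by likelihood) are assumptions made for this example. Article 27 does not prescribe a scoring method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RightsRisk:
    """One row of a rights exposure map. The 1-5 scales are this sketch's assumption."""
    group: str           # affected group
    charter_right: str   # Charter right at risk
    harm: int            # significance of harm to an individual, 1 (minor) to 5 (severe)
    reach: int           # how many people are affected, 1 (few) to 5 (very many)
    likelihood: int      # 1 (rare) to 5 (almost certain)

    @property
    def severity(self) -> int:
        # Use the worse of the two severity dimensions so that a grave harm
        # to a small group is not diluted by its small reach.
        return max(self.harm, self.reach)

    @property
    def score(self) -> int:
        return self.severity * self.likelihood


def prioritise(risks):
    """Return the risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Hypothetical entries for a hiring system.
register = [
    RightsRisk("rejected applicants", "Art. 47 effective remedy", harm=3, reach=4, likelihood=2),
    RightsRisk("older applicants", "Art. 21 non-discrimination", harm=4, reach=3, likelihood=3),
]

for risk in prioritise(register):
    print(risk.score, risk.group, risk.charter_right)
```

Taking the maximum of the two severity dimensions is a deliberate design choice here; an average would let a severe harm to a small group score low, which is the outcome a rights assessment is meant to avoid.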

Mitigation measures come in several forms. Technical controls include algorithmic bias testing across protected characteristics before deployment, output confidence thresholds below which the system escalates to human review rather than automated decision, and explainability mechanisms that enable affected individuals to understand and contest outputs.

Organisational controls include trained human oversight roles with genuine authority to override system recommendations, complaint and appeal pathways that are accessible and operational, staff training on the system's limitations and known failure modes, and regular post-deployment auditing of outcomes by affected group.

Vendor and third-party considerations must be explicitly addressed: if the AI system is licensed from a provider, what documentation, bias testing results, and performance disclosures has the provider supplied? The AI Act requires providers to make their technical documentation and instructions for use available to deployers precisely so that deployers can conduct their FRIA on a factual basis. The technical documentation that CTOs and AI teams must obtain from providers before deployment is a direct input into the FRIA risk analysis.
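Two of the technical controls mentioned above, bias testing across groups and confidence-based escalation, can be sketched in a few lines. This is a minimal illustration under stated assumptions: the function names are hypothetical, the group labels are placeholders, and the 0.8 confidence threshold is an arbitrary example value, not a figure from the AI Act.

```python
from collections import Counter


def selection_rates(outcomes):
    """outcomes: iterable of (group, selected) pairs -> {group: share selected}."""
    totals, selected = Counter(), Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means identical rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


def route(confidence, threshold=0.8):
    """Send low-confidence outputs to a human reviewer (threshold is an assumption)."""
    return "automated" if confidence >= threshold else "human_review"


# Hypothetical shortlisting outcomes for two applicant groups.
outcomes = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

rates = selection_rates(outcomes)
print(rates)               # {'group_a': 0.5, 'group_b': 0.25}
print(rate_ratio(rates))   # 0.5
print(route(0.65))         # human_review
```

A low rate ratio does not prove discrimination on its own, but it is the kind of signal that should feed back into the risk analysis and the post-deployment audit of outcomes by affected group.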

Step 4: Document Findings in a Structured FRIA Report

The FRIA output must be documented in a form suitable for submission to the national market surveillance authority and for internal audit purposes. While the EU AI Office is required by Article 27(5) to publish a template questionnaire — including an automated tool — to facilitate this documentation, that template had not been published as of early 2026. Organisations should not wait for it. The Article 27(1) requirements are sufficiently specific to support documentation now, and the template, when published, will require mapping existing assessments to it rather than starting from scratch.

A compliant FRIA document contains:

- a system description covering the deployment context, purpose, and frequency of use;

- a stakeholder and affected group analysis identifying who is affected and how;

- a rights exposure matrix mapping relevant Charter rights to identified risks, with severity and likelihood ratings for each;

- a description of the mitigation measures applied and planned;

- a human oversight structure specifying who is responsible, what authority they have, and what training they have received;

- a monitoring and review plan specifying how the assessment will be updated.

The document should include an executive summary accessible to board-level review, since the FRIA will need to be presented internally for governance approval before deployment.
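The report components described above lend themselves to a structured record with a completeness check. The sketch below is one possible shape, assuming free-text sections; the `FriaReport` class and its field names are invented for this example and do not reflect the AI Office template, which had not been published at the time of writing.

```python
from dataclasses import dataclass, fields


@dataclass
class FriaReport:
    """Sections follow the components described above; field names are this sketch's own."""
    executive_summary: str = ""
    system_description: str = ""
    affected_groups: str = ""
    rights_exposure_matrix: str = ""
    mitigation_measures: str = ""
    human_oversight: str = ""
    monitoring_and_review: str = ""

    def missing_sections(self):
        """Names of sections that are still empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


draft = FriaReport(
    system_description="CV screening tool used for all external vacancies.",
    affected_groups="External applicants, including those never shortlisted.",
)

print(draft.missing_sections())
```

A check like this can gate internal approval: a draft with missing sections is not ready for governance review or for notification to the authority.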

Step 5: Cross-Functional Review, Approval, and Notification

The FRIA must be reviewed by individuals with the competence to assess both the technical dimensions of the AI system and the legal and ethical dimensions of the rights analysis. In practice, this means a cross-functional team: legal or privacy counsel to assess rights implications, technical staff or the AI system provider to validate system description and limitation disclosures, domain experts who understand the operational context (HR professionals for a hiring system, loan officers for a credit system), and a representative with accountability for the organisational decision to deploy. Where the affected populations include potentially vulnerable groups — children, individuals with disabilities, people in financially precarious circumstances — involving external stakeholders or advocacy groups in the review strengthens both the quality of the assessment and its defensibility under regulatory scrutiny.

Once reviewed and approved internally, the results must be notified to the competent national market surveillance authority designated in the deployer's EU Member State. The exception is narrow — limited to exceptional circumstances of public security where the authority has specifically granted an exemption. For all standard deployments, notification is mandatory. Failure to notify is a compliance failure distinct from failure to conduct the FRIA.

FRIA vs DPIA: The Integrated Approach

Many organisations that deploy high-risk AI systems will need both a FRIA under the AI Act and a DPIA under GDPR Article 35 for the same system. High-risk AI systems almost invariably process personal data in ways that trigger DPIA requirements — systematic evaluation of individuals, use of new technology, processing of sensitive data categories, or automated decision-making with significant effects.

Article 27(4) of the AI Act explicitly addresses this overlap, stating that where a DPIA has already been conducted, the FRIA shall complement it. The most efficient approach is to conduct a unified assessment that addresses both frameworks simultaneously, using the DPIA as the foundation and extending it to cover the broader FRIA dimensions. The DPIA covers the data protection risk analysis, the legal basis assessment, the data minimisation and retention review, and the data subject rights implementation plan. The FRIA extends that foundation to cover the algorithmic bias analysis, the non-discrimination risk across protected characteristics, the broader Charter rights mapping, the explainability documentation, the human oversight structure, and the post-market monitoring plan.

A comprehensive DPIA conducted with AI systems in mind will typically satisfy 30 to 40 percent of what the FRIA requires. Structuring DPIA workflows so they automatically extend to FRIA dimensions for AI systems avoids the inefficiency of parallel assessment processes and produces a single, coherent governance document that addresses both regulatory frameworks.

The key difference to remember: a DPIA is concerned with the data subjects whose personal data is processed. A FRIA is concerned with everyone who is affected by the AI system's outputs, regardless of whether they are data subjects.

Common FRIA Mistakes

Conducting the FRIA after deployment is the most straightforward compliance failure. Article 27 is explicit: the assessment must be carried out before the system is deployed. A retrospective FRIA completed after an AI system has been running for months satisfies neither the timing requirement nor the purpose of the obligation, which is to identify and mitigate risks before they materialise.

Limiting the FRIA to a data protection lens is the second most common error. Teams familiar with DPIAs tend to replicate that structure when asked to conduct a FRIA. But non-discrimination, freedom of expression, access to justice, and workers' rights must be assessed even if the system processes no special category personal data. An AI employment screening tool that processes only age and job history, with no sensitive data categories, still carries direct exposure under the Charter's non-discrimination provisions.

Failing to involve affected groups produces assessments that are technically complete but analytically thin. The populations most likely to experience adverse impacts from high-risk AI systems — those already subject to discrimination, those in vulnerable financial positions, those with limited access to legal remedies — are rarely represented in the internal teams conducting the assessment. Consulting with representative organisations, civil society bodies, or subject matter experts who work with affected communities produces better risk identification and more credible documentation.

Treating the FRIA as a one-time exercise is structurally inconsistent with the AI Act's requirements. The assessment must be updated when the deployer determines that any of the assessed factors have changed or are no longer current. For machine learning systems with continuous or periodic retraining, where model behaviour in production can drift meaningfully from the version originally assessed, the FRIA must be a living document connected to the system's operational reality. Building AI governance infrastructure that maintains continuous documentation and flags material changes requiring reassessment is the only operationally sustainable approach at any significant deployment scale.
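Flagging material changes can start with something as simple as comparing the factors recorded at assessment time with the system's current state. The sketch below assumes both are kept as plain dictionaries; the function names and the keys (`model_version`, `purpose`, `affected_groups`) are hypothetical, and deciding whether a detected change is material remains a human judgment.

```python
_MISSING = object()  # sentinel so that an absent key differs from a stored None


def changed_factors(assessed: dict, current: dict) -> list:
    """Return the sorted keys whose values differ between the two snapshots."""
    keys = assessed.keys() | current.keys()
    return sorted(
        key for key in keys
        if assessed.get(key, _MISSING) != current.get(key, _MISSING)
    )


def needs_reassessment(assessed: dict, current: dict) -> bool:
    """True when any assessed factor has changed or a new one has appeared."""
    return bool(changed_factors(assessed, current))


# Hypothetical snapshots: the model was retrained after the FRIA was approved.
assessed = {
    "model_version": "2025-11",
    "purpose": "shortlisting external applicants",
    "affected_groups": ("external applicants",),
}
current = dict(assessed, model_version="2026-03")

print(changed_factors(assessed, current))     # ['model_version']
print(needs_reassessment(assessed, current))  # True
```

In practice the current snapshot would be produced automatically from deployment metadata, so that a retraining run or a change of purpose raises a review task without relying on someone remembering the FRIA exists.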

FAQ

What does FRIA stand for?

Fundamental Rights Impact Assessment. It is the assessment required under Article 27 of the EU AI Act for certain deployers of high-risk AI systems to evaluate potential impacts on fundamental rights protected under the EU Charter of Fundamental Rights.

Is the FRIA mandatory under the EU AI Act?

Yes, for deployers that are bodies governed by public law, private entities providing public services, and deployers of AI systems for creditworthiness or life and health insurance risk assessment. The compliance deadline for Annex III high-risk systems is August 2, 2026.

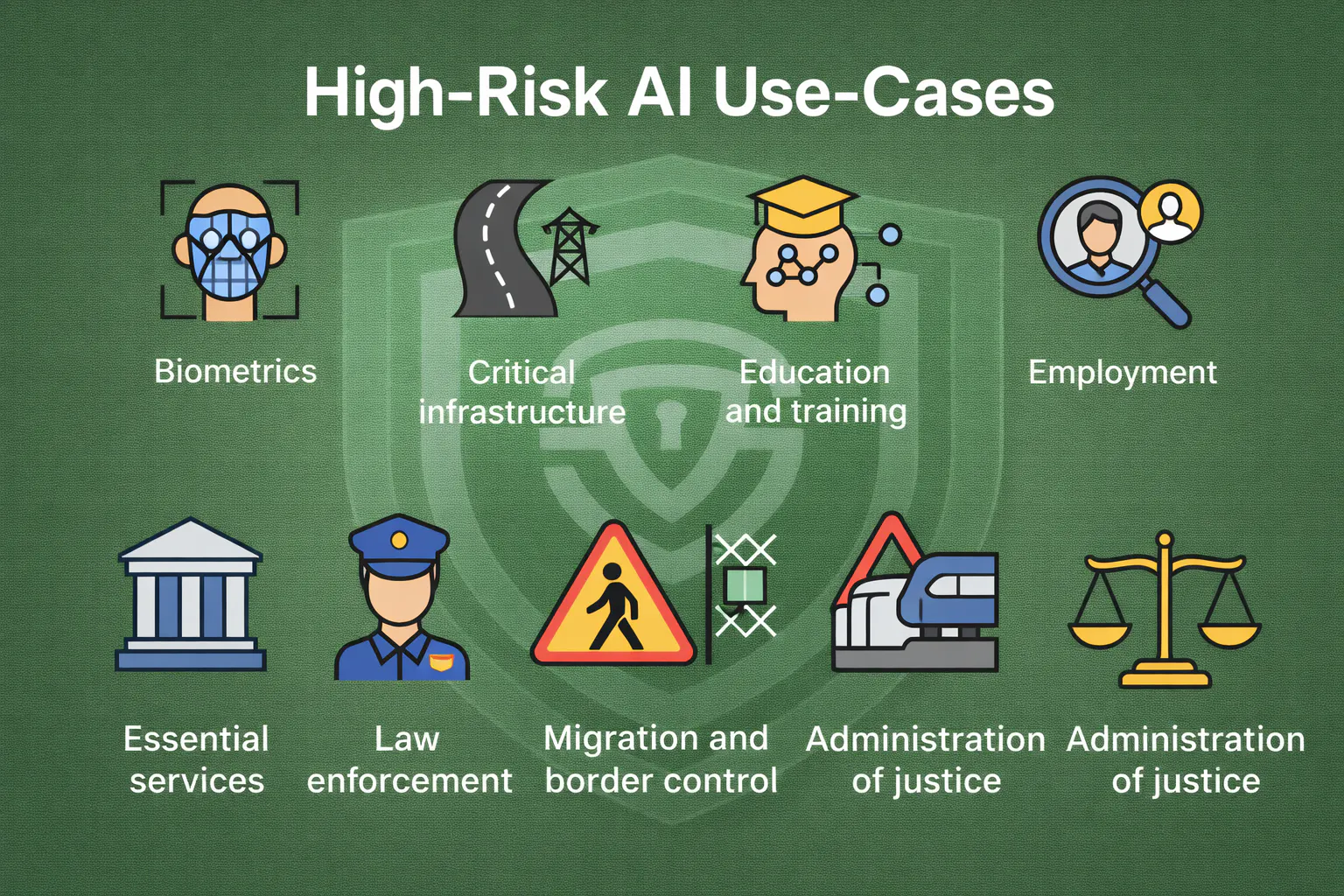

Which AI systems trigger the FRIA obligation?

High-risk AI systems listed in Annex III of the AI Act, which covers biometrics, education, employment and worker management, access to essential services (including credit scoring and insurance), law enforcement, migration, and administration of justice, when they are deployed by one of the deployer categories named in Article 27. Critical infrastructure safety components are exempt.

How long does a FRIA take to complete?

It depends on the system's complexity and the organisation's existing documentation. For organisations with a mature DPIA process and complete technical documentation from the AI provider, a well-structured FRIA may take two to four weeks. For organisations starting from scratch — no AI system inventory, no existing impact assessment infrastructure — six to twelve weeks is more realistic once all required information is assembled.

Can FRIA findings replace other risk assessments?

No. A FRIA complements rather than replaces a DPIA. Where both are required, the most efficient approach is an integrated assessment addressing both frameworks in a single structured document. The FRIA is specifically concerned with the broader fundamental rights dimensions that a DPIA does not cover.

What happens if you don't comply?

Non-compliance with Article 27 constitutes an infringement of Chapter III of the AI Act, subject to administrative fines of up to €15 million or 3% of global annual turnover. National market surveillance authorities will have full investigation and enforcement powers from August 2026.

The August 2, 2026 deadline for Annex III high-risk AI system obligations is not a compliance ambition. It is a legal threshold that, once passed, exposes organisations to fines, enforcement orders, and the operational disruption of being required to modify or discontinue deployed systems. If your organisation is a deployer of high-risk AI, the question is not whether to conduct a FRIA — it is whether you have the documentation infrastructure to conduct one rigorously and maintain it as your systems evolve.

Start with your AI system inventory. See how Secure Privacy's AI governance platform helps organisations conduct and document Fundamental Rights Impact Assessments, integrate FRIA workflows with DPIA processes, and maintain audit-ready evidence for EU AI Act compliance.
